Here’s the first DigitalLabs project opportunity of the summer! Fancy helping us out? Check out the posting on Jobs4Students.

Archives for April 2018

We’re Recruiting! Internships available soon.

Early Warning!! We will be looking to fill 2 internships, shortly. Don’t go away! We’ll post more information as we get it.

GM Higher: Augmented Reality for Animators

Greater Manchester Higher’s booklet needed a helping hand from DigitalLabs to reach the app-loving younger generation. Sound, animation and Augmented Reality to the rescue, thanks to a team of students from the School of Art.

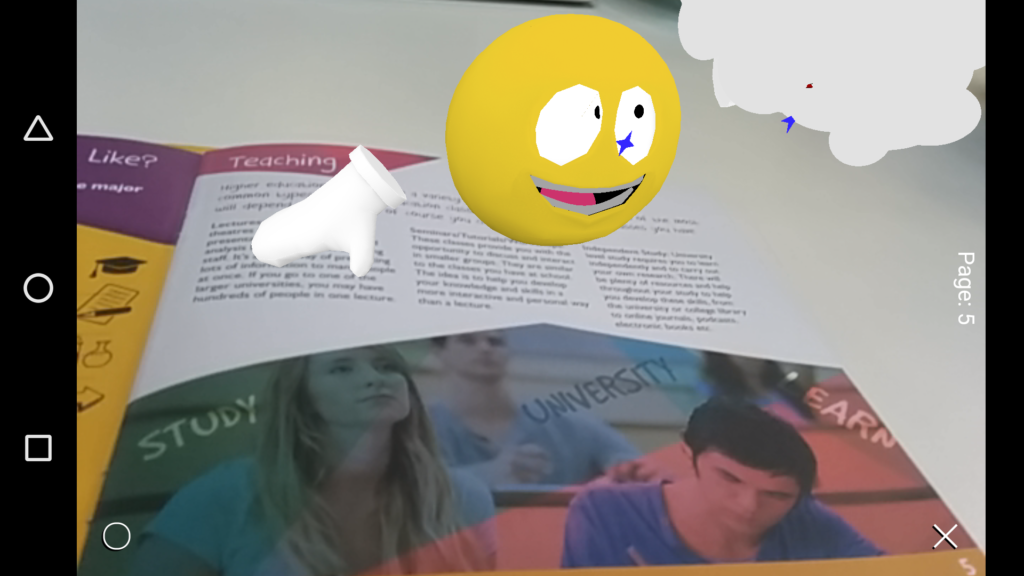

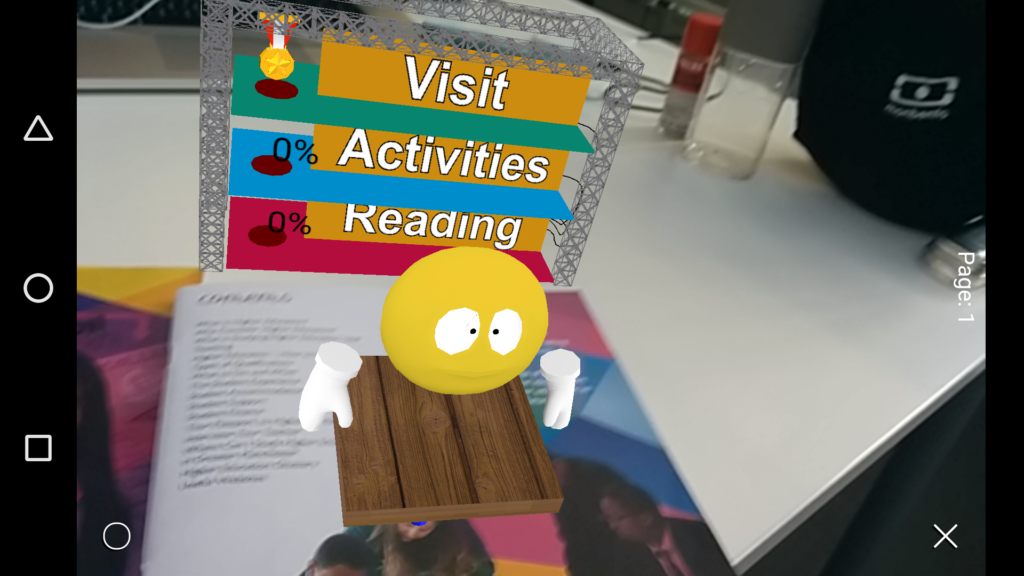

We needed to create a fully-interactive layer on top of the existing book, without making any changes to the existing print stock. We realised this would be a perfect fit for ‘Augmented Reality’ (AR), the mixed-reality version of (the exciting re-issue of 1990s fad) Virtual Reality. AR would allow us to interact with the book, layering on interactive elements and feeding back information that would help the book owner get the most from it. To ease this process, a character would guide the reader through the activities, letting the reader know how much of the book they had completed and what they should do next.

Developing the team: DigitalLabs and MMU Students

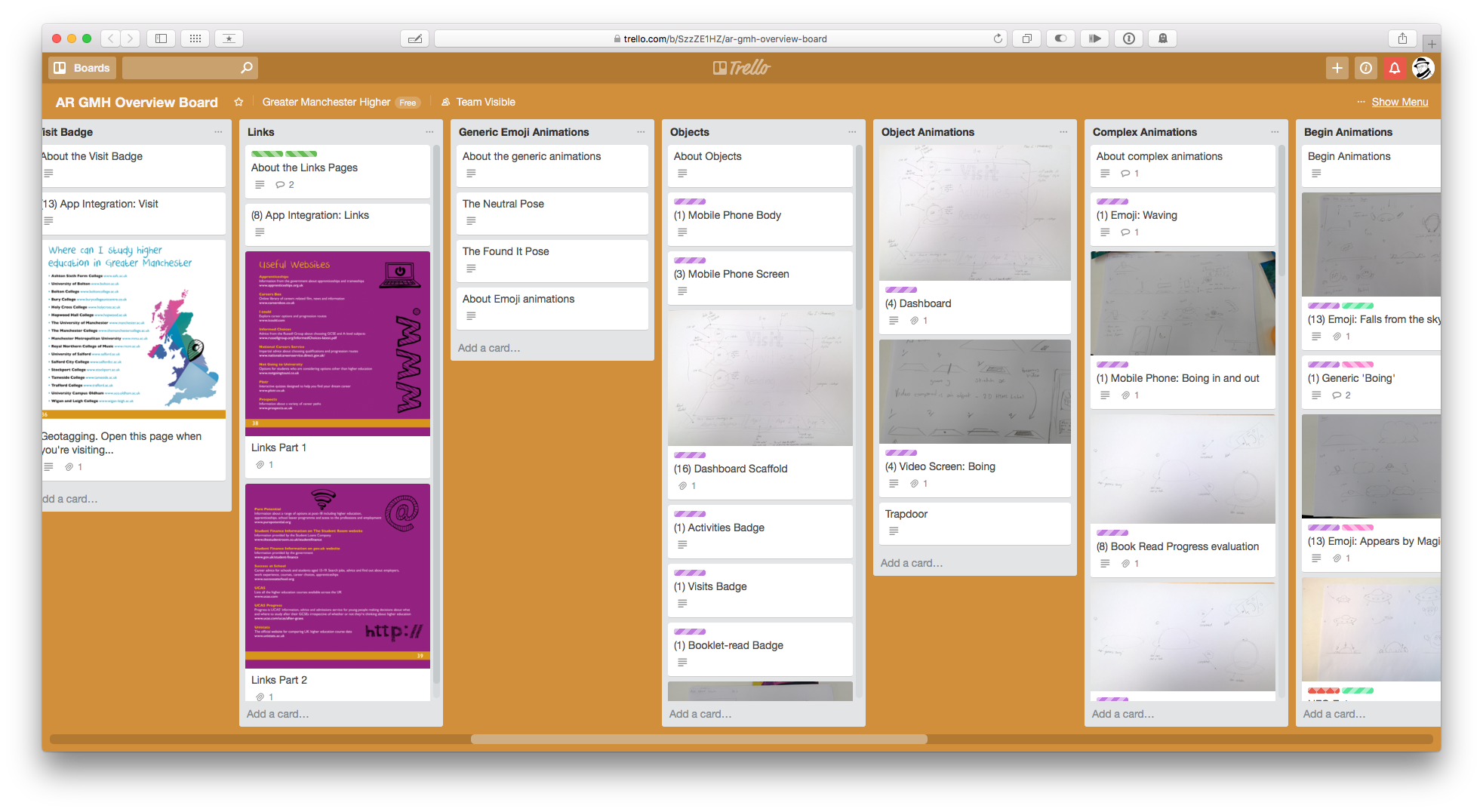

Technology aside, we would need to find a number of collaborators to develop the character and animated sequences. Luckily, MMU being the broad church it is, we had the Jobs4Students programme and a whole School of Art to work with. We posted positions and ended up taking on a small team of animation students – Phil, Federico and George.

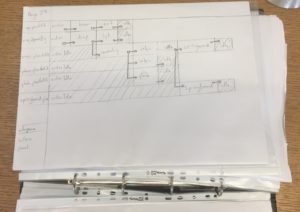

The project would take in a range of creative skills from an early stage; we would need to conceptualise the interaction and storyboard it for each page in the booklet. Models and rigging would need to be made before any scene building or animation could take place, and all of this would need to be considered as being rendered in real-time on a small screen with a 360° view on six axes.

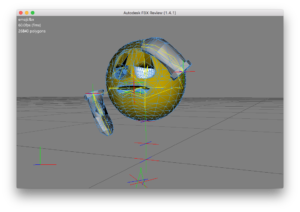

We chose to animate an ‘emoji’ character, building on the anticipated popularity of what would turn out to be 2017’s Razzie winner, The Emoji Movie. An emoji let us avoid a gendered or racially identifiable character while remaining relatable and familiar to the target audience for the app; additionally, a certain amount of metatextual play could take place.

George developed sketches, looking at ways the character could re-use sequences to perform Hanna-Barbariatric surgery on our storage usage, and Federico built up the character geometry, exploring the skeletal constraints and animation fidelity between Maya and the AR animation engine we would be using.

We had developed an in-house animation scripting system for AR, and we devised a diagramming system to help structure our animation sequences: the relationships between actors on the stage, potential outcomes and decision-making flows, and a clear means to transcribe animation flows for our system.

As part of the interactive experience, the reader can interact with the virtual environment on the phone or tablet. Buttons and other interactive elements needed to appear on the booklet and be precisely aligned to match the positions of printed elements in the book. We built a tool in MaxMSP to generate the code we’d need, allowing us to precisely align the buttons visually – this threatened to be a huge time-sink otherwise.
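To give a flavour of the kind of conversion such a tool has to perform, here is a minimal sketch in Python. It is not the MaxMSP patch itself: the page size, the `PrintedRect` fields, the `to_overlay` function and the output coordinate convention (origin at the page centre, everything in page-width units, y pointing up) are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed page size in millimetres (A5 portrait); the booklet's real size is not stated.
PAGE_WIDTH_MM = 148.0
PAGE_HEIGHT_MM = 210.0


@dataclass(frozen=True)
class PrintedRect:
    """A printed element measured on the page, origin at the top-left corner."""
    name: str
    x_mm: float
    y_mm: float
    width_mm: float
    height_mm: float


def to_overlay(rect, page_w=PAGE_WIDTH_MM, page_h=PAGE_HEIGHT_MM):
    """Convert a printed rectangle to centre-origin coordinates in page-width units."""
    centre_x = rect.x_mm + rect.width_mm / 2
    centre_y = rect.y_mm + rect.height_mm / 2
    return {
        "name": rect.name,
        # Shift the origin to the page centre and scale by the page width.
        "x": (centre_x - page_w / 2) / page_w,
        # Flip the y axis so that "up" on the page is positive.
        "y": (page_h / 2 - centre_y) / page_w,
        "width": rect.width_mm / page_w,
        "height": rect.height_mm / page_w,
    }


if __name__ == "__main__":
    button = PrintedRect("start_activity", 64.0, 95.0, 20.0, 20.0)
    print(to_overlay(button))
```

Scaling both axes by the page width keeps buttons square regardless of the page's aspect ratio; an AR engine with a different convention would need a different final step.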

An off-syllabus world of the unknowable

There were new challenges the animators found themselves having to confront in adjusting from making rendered sequences to creating real-time rendered assets, and these challenges came on many fronts. Instead of render farms to handle any level of complexity and pre-processing of assets, the models had to be processable in real-time on a range of device capabilities and capacities.

This meant simplifying geometry and optimising for perception, which was compounded by the discrepancy between Maya’s animation toolkit and what survived on export to a real-time device. This required a certain amount of creative ingenuity, backed up by an in-depth technical exploration of possible solutions.

This was a repeated pattern as we worked around texturing and lighting for AR environments, as well as dealing with a stage that had to interact with an environment that both was and wasn’t there, and could be looked at from almost any angle.

We had to work through sizing issues, balancing an on-screen presence against making things clickable and interactive without adversely impacting the pages on which these things interacted.

Our final technical job was data export and scene management, which depended on Maya’s Game Exporter to bundle multiple takes and animations together; this would force the animation team to take on the mindset of game asset creators, and make decisions about how best to organise and structure the data to minimise size whilst preserving flexibility.

Typical animation courses will look at traditional output – linear animation with controlled cameras. This project provided experience with the opportunities (and limitations) provided by AR experiences and viewer-controlled camera systems. With both Apple and Google investing heavily in AR on their platforms, this experience provided an opportunity to develop skills for the future whilst working in-house with a hands-on technical team.

No animated experience is complete without supporting audio. Mercifully, we’d managed to avoid lip syncing, but we produced a restrained soundscape for the AR experience; while masked, the member of staff who voiced ‘emoji’ is still with us and requested to remain nameless.

The fruit of our labours

Despite a number of technology implementation hiccups, we implemented a cross-platform AR application with interactive elements, responsive scene animation, and a wide range of content sources (animations, HTML displays, and videos).

The animation team learnt about building geometry and animations for real-time engines, and developed a better understanding of the mechanics of both their 3D editing environments and small device rendering systems, as a consequence of having to maintain the animation quality of Maya on a significantly less powerful runtime. This involved developing techniques around animation and texture baking – the esoteric ends of the animation pipeline that are typically outside pre-rendered animation approaches.

The University And Me booklet gained new skills, too. It could now provide feedback (in the form of buttons) about which sections were important for different age groups, and let readers click those buttons and have the app remember which activities had been done already. An animated dashboard showed how much of the booklet they’d completed, and what activities they still had to do. It could even keep track of which locations they’d visited and mark them off automatically in a list.
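The progress tracking described here can be pictured with a small sketch in Python. This is an illustration only, not the app's actual code: the `BookletProgress` class, its method names and the activity names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class BookletProgress:
    """Remembers which activities a reader has completed (hypothetical model)."""
    activities: tuple
    completed: set = field(default_factory=set)

    def mark_done(self, activity):
        """Record an activity as done; marking it twice has no extra effect."""
        if activity not in self.activities:
            raise KeyError(f"unknown activity: {activity}")
        self.completed.add(activity)

    def remaining(self):
        """Activities still to do, in booklet order."""
        return [a for a in self.activities if a not in self.completed]

    def fraction_complete(self):
        """Share of the booklet completed, from 0.0 to 1.0."""
        if not self.activities:
            return 0.0
        return len(self.completed) / len(self.activities)


if __name__ == "__main__":
    # Activity names are invented for the example.
    progress = BookletProgress(("quiz", "campus_visit", "budget_planner", "open_day"))
    progress.mark_done("quiz")
    print(progress.fraction_complete(), progress.remaining())
```

A dashboard would only need `fraction_complete()` and `remaining()`; location check-ins could call `mark_done` in the same way as a button press.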

We felt the overall aim of developing an App that would reinforce – not replace – the book had been achieved. The App provided a literally singing and dancing layer on top of the booklet, allowing students to compare each other’s achievements with simple feedback and entertaining animation, providing an extra reason to hang on to the booklet… and maybe take a few cues from it.

Tales From The Narrator – Part One

Although the DigitalLabs team have been busy working on projects and teaching students, we haven’t been publicly sharing what we’ve been up to. My role is to tell the story of DigitalLabs, where we are and where we’re going. We’re proud of our work and we’d like to share it. There are a number of things that make us different from other digital agencies. There are also a number of things that make us different from other university teams. We’re unique and it’s time we explained how.

Greater Manchester Higher – Increasing engagement through AR, activities and interaction

Greater Manchester Higher is an organisation providing information and guidance to school-age students interested in entering Higher Education in the region.

One of the ways they do this is by distributing a beautifully produced and comprehensive booklet.